Data Normalization Techniques: Types For ML And Databases

Data Normalization Techniques: Types For ML And Databases

Patient records from Epic look nothing like patient records from Cerner. Lab results, medication lists, and demographic fields arrive in different formats, different structures, and different scales. If you're building healthcare applications that pull data from multiple EHRs, understanding data normalization techniques is not optional, it's the foundation that makes everything else work.

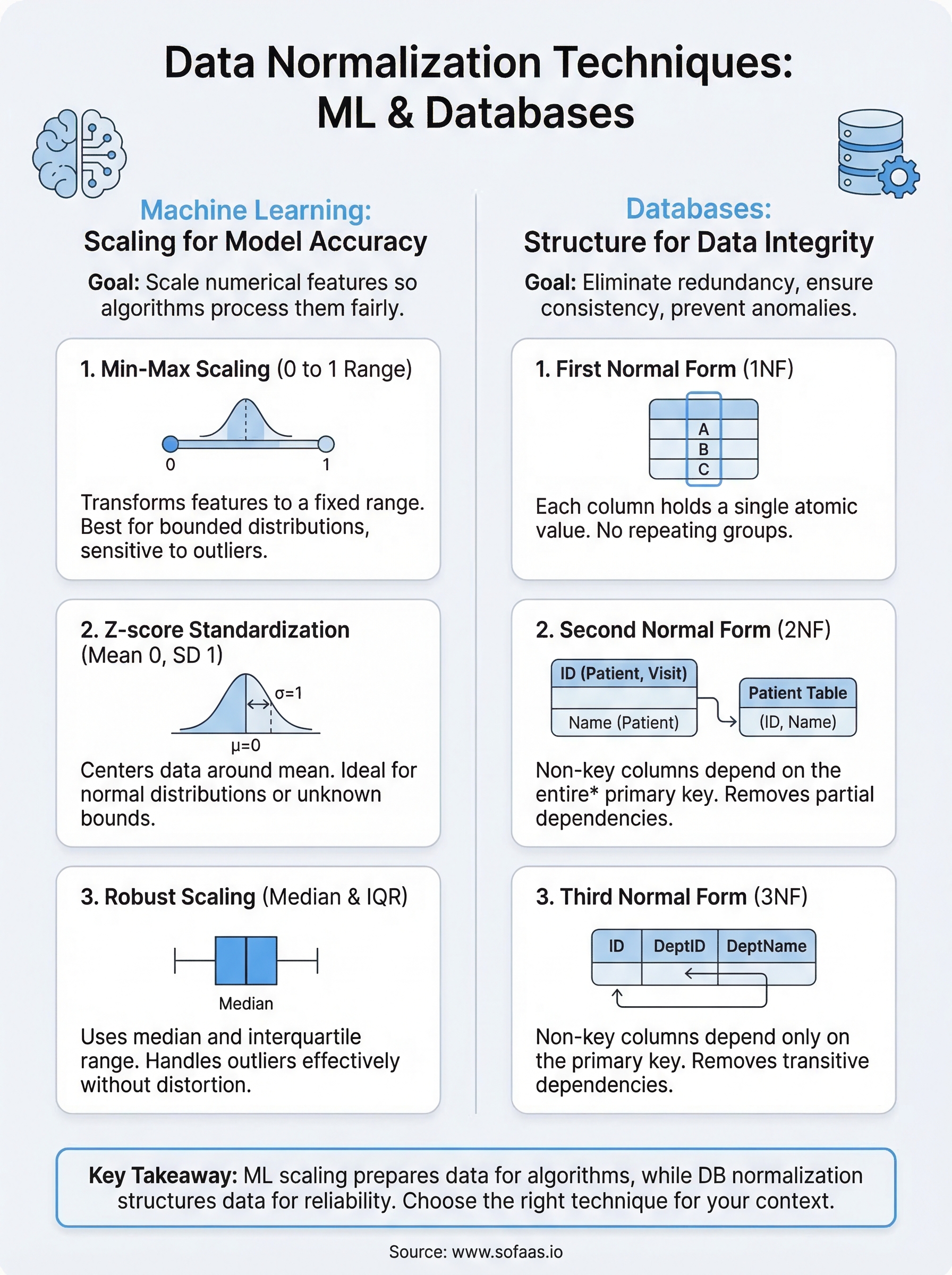

But here's the thing: "data normalization" means different things depending on context. In machine learning, it refers to scaling numerical values so algorithms can process them without bias toward larger numbers. In database design, it means organizing tables to eliminate redundancy and maintain data integrity. Both definitions matter when you're working with healthcare data at scale.

At SoFaaS, we deal with this problem daily. Our managed SMART on FHIR platform connects healthcare applications to EHRs through a unified API, which means we handle the messy work of making inconsistent data consistent. That experience gives us a practical perspective on why normalization matters so much.

This article breaks down the major normalization techniques across both domains, from Min-Max and Z-score scaling for ML pipelines to First, Second, and Third Normal Forms for relational databases. Whether you're preprocessing clinical data for a predictive model or designing a schema to store patient records, you'll walk away with a clear understanding of which technique fits your use case and why.

Why data normalization matters

When raw data enters your system, it rarely arrives ready to use. Healthcare data is a perfect example: a patient's age might be a number between 0 and 120, their blood pressure readings might range from 60 to 200, and certain lab values can span thousands of units. Without proper normalization, machine learning algorithms weight those larger numbers more heavily, and databases store the same information in multiple places, creating inconsistencies that grow harder to untangle over time. The downstream effects range from inaccurate model predictions to broken clinical workflows, none of which you want when real patient outcomes are involved.

Skipping normalization doesn't just slow your system down; it quietly corrupts the outputs you rely on to make decisions.

In machine learning, scale determines outcome

When you feed raw, unscaled data into a machine learning model, the model has no way to know that a blood pressure value of 140 and a patient age of 40 exist on completely different scales. Distance-based algorithms like K-nearest neighbors and support vector machines treat numerical magnitude as meaningful, so a feature with a range of 0 to 10,000 will dominate a feature with a range of 0 to 1. Applying the right data normalization techniques before training ensures that every feature contributes proportionally to what the model learns.

Your preprocessing choices also affect how quickly a model converges during training. Gradient descent, the optimization method behind most neural networks, moves faster and more reliably when input features share a similar scale. When features are left unscaled, the optimizer has to navigate a loss surface that is elongated and uneven, which leads to slower training and sometimes unstable results. For healthcare applications where you're combining features like medication dosages, visit frequencies, and vitals, this is a real and persistent problem.

In databases, structure prevents data rot

Database normalization solves a fundamentally different problem: it organizes your tables so that each piece of information lives in exactly one place. When the same data appears in multiple tables without a controlled structure, updating one record leaves the others stale. Over time, your database accumulates conflicting entries that are expensive to reconcile and can cause reporting errors that undermine the clinical workflows depending on that data.

For applications that pull from multiple EHRs, this problem compounds quickly. A patient's address, insurance details, or medication history might arrive from three different systems in three different formats. If your schema isn't designed to handle these variations through a normalized structure, you end up with duplicate patient records, broken relationships between tables, and queries that return inconsistent results. Normalization gives you the structural foundation to merge and trust that data at scale.

Data normalization techniques for machine learning

The most common data normalization techniques for ML fall into a few distinct categories, each designed for different data distributions and algorithm types. Knowing which one to apply to your dataset before training will save you significant debugging time later.

Min-Max scaling

Min-Max scaling transforms your features so every value falls within a fixed range, typically 0 to 1. The formula takes each value, subtracts the minimum, and divides by the range. This works well when your data has a known, bounded distribution and you're using algorithms like neural networks or K-nearest neighbors that are sensitive to feature magnitude.

The limitation is sensitivity to outliers. If a single extreme value exists in your dataset, it compresses all other values toward one end of the range, which obscures meaningful variation in the bulk of your data.

Z-score standardization

Z-score standardization rescales your data so it has a mean of 0 and a standard deviation of 1. Instead of bounding values to a range, it centers them around the mean and expresses each value in terms of how many standard deviations it falls from that center.

This technique is the better default when you don't know the bounds of your data or when outliers are present, since it doesn't compress everything into a fixed range.

Standardization fits linear models and logistic regression well, where the algorithm performs better when features are centered. If your clinical data naturally follows a bell curve, Z-score normalization preserves that shape while making the scale uniform across all features.

Robust scaling

Robust scaling uses the median and interquartile range instead of the mean and standard deviation. That distinction matters when your dataset includes outliers, which is common with lab values or billing data. Because it ignores extreme values during calculation, it preserves meaningful variation in the middle of your distribution more reliably than Min-Max or Z-score methods. For healthcare data specifically, abnormal readings are part of the data, not errors to be filtered out.

Data normalization techniques for databases

Database normalization techniques organize your schema so each piece of data lives in exactly one place. The process works through a series of rules called normal forms, each building on the last. Most production systems target Third Normal Form, which eliminates the most common sources of redundancy and data inconsistency without over-engineering the schema.

First Normal Form (1NF)

First Normal Form requires that every column in a table holds a single, atomic value and that each row is uniquely identifiable. In practice, this means no comma-separated lists stuffed into a single field and no repeating column groups like medication_1, medication_2, medication_3. When your EHR data arrives with multiple values packed into one field, 1NF forces you to break those out into separate rows with a proper primary key.

Second Normal Form (2NF)

Second Normal Form builds on 1NF by requiring that every non-key column depends on the entire primary key, not just part of it. This matters most when you're using a composite primary key, meaning a key made up of two or more columns. If a column only relates to one part of that key, it belongs in a separate table.

Partial dependencies are the most common structural problem in schemas that started small and grew without a plan.

For example, if your table tracks patient visits and uses a composite key of patient_id and visit_date, a column like patient_name only depends on patient_id. That column belongs in a dedicated patient table, not the visits table.

Third Normal Form (3NF)

Third Normal Form removes transitive dependencies, meaning non-key columns that depend on other non-key columns rather than directly on the primary key. This is the standard target for most relational database schemas because it eliminates the update anomalies that cause records to drift out of sync. In healthcare data models, where patient, provider, and encounter records all interrelate, reaching 3NF gives your queries a reliable and consistent foundation to build on.

How to choose the right normalization technique

Selecting the right approach comes down to three things: your data's distribution, the algorithm or system you're feeding it into, and how much noise or outlier activity your data contains. No single technique covers every situation, and applying the wrong one can introduce the same problems you were trying to solve. The right data normalization techniques fit both the shape of your data and the requirements of the system consuming it.

Your choice of normalization method should follow your data's behavior, not the other way around.

For machine learning pipelines

Start by examining your data's distribution and outlier profile before picking a scaling method. If your features have a known, bounded range and no extreme outliers, Min-Max scaling is a clean, straightforward choice. If your data has outliers that reflect real events rather than data errors, like abnormal lab values in clinical records, Robust scaling protects the meaningful variation in the rest of your distribution. Use Z-score standardization as your default when you're working with features that follow a roughly normal distribution or when you're unsure about the bounds of your data.

Here's a quick reference to match conditions to methods:

| Condition | Recommended Method |

|---|---|

| Bounded range, no outliers | Min-Max scaling |

| Outliers present, meaningful | Robust scaling |

| Normal distribution, unbounded | Z-score standardization |

| Linear or logistic regression | Z-score standardization |

For database schemas

Your target normal form should match the scale and complexity of your application. For most production systems, Third Normal Form is the right stopping point. It removes redundancy without fragmenting your schema into so many tables that queries become difficult to maintain. If you're working with a simple, read-heavy dataset that rarely changes, stopping at 2NF may be acceptable. But for any schema that stores relationships between entities like patients, providers, and encounters, 3NF gives you the structural consistency that prevents data drift over time.

Common mistakes and best practices

Even experienced teams make predictable errors when applying data normalization techniques. The most damaging ones aren't obvious until they surface downstream in broken model predictions or corrupted database records. Knowing where these mistakes occur lets you build better safeguards from the start, rather than diagnosing problems under production pressure.

Mistakes to avoid

Normalizing your entire dataset before splitting it into training and test sets is one of the most common ML preprocessing errors. When you fit a scaler on the full dataset, information from your test set leaks into your training process, producing overly optimistic evaluation results that don't hold in production. Always fit your scaler on the training data only, then apply that same fitted scaler to the test set as a separate step.

Fitting your scaler on the full dataset before splitting guarantees that your model evaluation numbers will not reflect real-world performance.

Another frequent mistake in database design is stopping at First Normal Form and assuming that's enough. 1NF addresses atomic values, but it leaves partial and transitive dependencies intact. Those hidden dependencies are what cause records to fall out of sync when data gets updated across your schema, creating the exact inconsistencies normalization was meant to prevent.

Best practices to follow

Document your normalization decisions as part of your pipeline. If you apply Z-score standardization to one feature and Robust scaling to another, record those choices along with the fitted parameters. Without that documentation, reproducing your pipeline or debugging unexpected behavior becomes significantly harder, especially when team members change or models get retrained months later.

For database schemas, test your normal form compliance with real queries, not just by reviewing the design on paper. Run updates, inserts, and deletes against a sample dataset and check whether inconsistencies appear. A schema that looks clean in a diagram often reveals edge cases once actual data flows through it, and catching those before production saves you from expensive migrations.

Final takeaways

Data normalization techniques split into two distinct disciplines, and confusing them leads to real problems. For ML pipelines, your goal is to scale numerical features so algorithms treat each input fairly. For databases, your goal is to eliminate redundancy by organizing tables into normal forms that keep records consistent as data changes. Both goals share the same underlying principle: structure your data so the systems consuming it can trust what they receive.

When you're building healthcare applications that pull from multiple EHRs, these decisions stack on top of each other fast. Choosing the wrong scaling method degrades your model. Stopping short of Third Normal Form creates data drift that's painful to fix later. The right approach is to match your technique to your data's behavior and your system's requirements, then document every decision you make.

If you want to skip the infrastructure complexity entirely and connect your healthcare app to multiple EHRs without building custom integrations, connect your healthcare app to EHRs in days.

The Future of Patient Logistics

Exploring the future of all things related to patient logistics, technology and how AI is going to re-shape the way we deliver care.